Beyond Flatland: User Interface Design for VR

Posted: November 5, 2019

Explorations in VR Design is a journey through the bleeding edge of VR design – from architecting a space and designing groundbreaking interactions to making users feel powerful.

Art takes its inspiration from real life, but it takes imagination (and sometimes breaking a few laws of physics) to create something truly human. With Ultraleap's Interaction Engine 1.0, VR developers now have access to unprecedented physical interfaces and interactions – including wearable interfaces, curved spaces, and complex object physics.

These tools unlock powerful interactions that will define the next generation of immersive computing, with applications from 3D art and design to engineering and big data. Here’s a look at Ultraleap’s design philosophy for VR user interfaces, and what it means for the future.

Escape from Flatland

In the novel Flatland, the life of a two-dimensional shape is disrupted when he encounters a creature from another dimension – a Sphere. The strange newcomer can drop in and out of reality at will. The Sphere sees Flatland from an unprecedented vantage point. Adding a new dimension changes everything.

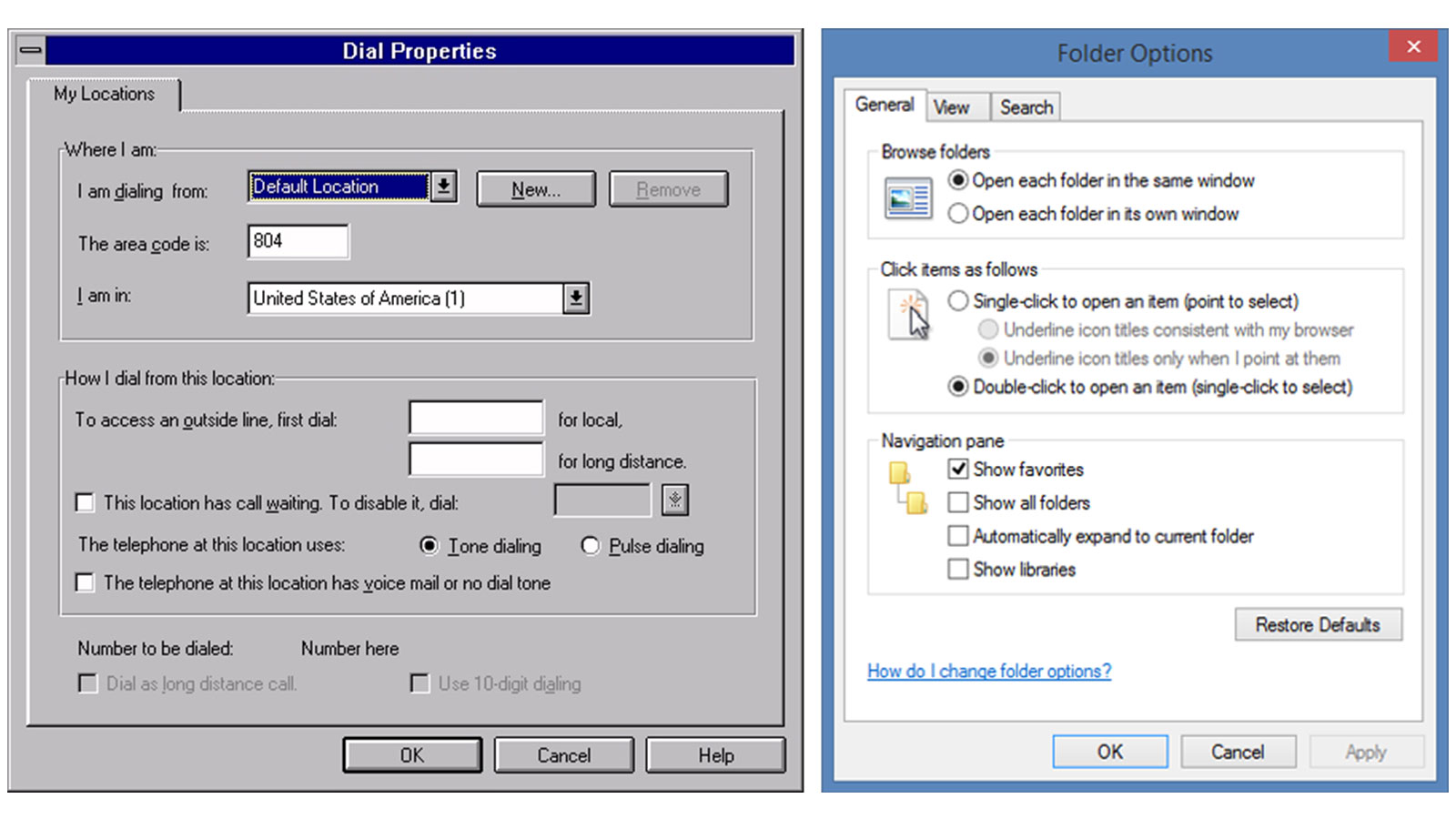

In much the same way, VR upends the digital design philosophies that have grown relentlessly flatter over the past few decades. Early GUIs often relied heavily on skeuomorphic 3D elements, like buttons that appeared to compress when clicked. These faded away in favor of simple color changes to signal state, reflecting a flat design aesthetic.

Many of those old skeuomorphs meant to represent three-dimensionality – the stark shadows, the compressible behaviors – are finding new life in this medium. For developers and designers just breaking into VR, the journey out of Flatland will be disorienting but exciting.

Skeuomorphic design is unlikely to be the answer for interfaces in VR, but the core physicality that serves as the basis for these systems may be a useful guide when designing for early users of spatial computing. A person’s first reaction will be to reach out and touch the digital world. At least in the early phases of spatial interfaces, they will expect a physical response in return.

VR design will converge on natural visual and physical cues that communicate the structure and relationships between different UI elements. “A minimal design in VR will be different from a minimal web or industrial design. It will incorporate the minimum set of cues that fully communicates the key aspects of the environment.” A common visual and physical language will emerge, much as it did in the early days of the web, and ultimately fade into the background. We won’t even have to think about it.

The Evolution of Our User Interface Widgets

Our user interface tools have been continuously refined over the past several years based on relentless optimization and user testing. The previous iteration of this toolset, the UI Input Module, provided a simplified interface for physically interacting with World Space Canvases in Unity’s UI System. This made it possible for users to reach out and “touch” UI elements to interact with them.
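To make "touching" a UI element concrete, here is a minimal Python sketch of one common way to turn a tracked fingertip into a button press: measure how far the fingertip has pushed past the button face along its normal, and fire once that depth crosses a threshold. The `PokeButton` class, its thresholds, and the 1 cm travel are hypothetical illustrations, not the UI Input Module's or the Interaction Engine's actual API, and the sketch ignores the button's width and height for brevity.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


@dataclass
class PokeButton:
    """Hypothetical flat button pressed by a fingertip moving along -normal."""

    center: Vec3             # resting position of the button face, in meters
    normal: Vec3             # unit vector pointing out of the face, toward the user
    travel: float = 0.01     # assumed maximum press depth: 1 cm
    press_at: float = 0.8    # fraction of travel that fires a press
    release_at: float = 0.4  # lower release threshold (hysteresis)
    pressed: bool = False

    def depth(self, fingertip: Vec3) -> float:
        # Signed distance of the fingertip in front of the face; negative means pushed in.
        in_front = dot(sub(fingertip, self.center), self.normal)
        # Press depth, clamped to the button's physical travel.
        return min(max(-in_front, 0.0), self.travel)

    def update(self, fingertip: Vec3) -> bool:
        """Feed one tracked fingertip position; True only on the frame a press begins."""
        fraction = self.depth(fingertip) / self.travel
        if not self.pressed and fraction >= self.press_at:
            self.pressed = True
            return True
        if self.pressed and fraction <= self.release_at:
            self.pressed = False
        return False


# A fingertip approaches, pushes in past 80% of the travel, then pulls back out.
button = PokeButton(center=(0.0, 0.0, 0.0), normal=(0.0, 0.0, 1.0))
events = [button.update((0.0, 0.0, z)) for z in (0.02, 0.0, -0.005, -0.009, -0.01, -0.002)]
print(events)  # [False, False, False, True, False, False]
```

The gap between the press and release thresholds means small tracking jitter near the trigger depth does not register as a burst of repeated clicks.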

Interaction Engine 1.0 marked a major transition for our UI tools. Instead of living in a standalone module, UI input is now handled within the Interaction Engine – opening up richer physical interactions and major performance optimizations for mobile and all-in-one VR.

We’ve also greatly expanded support for curved spaces and interfaces, which better reflect how human beings actually move in 3D space. Ultraleap's Graphic Renderer is a general-purpose tool that can curve and render an entire interface with a single draw call. These capabilities are all on display in our Button Builder example, which showcases our newly released graphical optimizations and physical UI elements, all within a curved space.
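The geometry behind a curved interface can be sketched in a few lines. Assuming a cylindrical layout centered on the user (one plausible choice, not necessarily how the Graphic Renderer works internally), each point on a flat panel is bent onto the cylinder so that horizontal distances on the panel become arc lengths on the curve. The function name `flat_to_cylinder` is hypothetical, and a real renderer would more likely apply this kind of warp per vertex on the GPU than in Python.

```python
import math


def flat_to_cylinder(x: float, y: float, radius: float) -> tuple[float, float, float]:
    """Bend a point on a flat panel onto a vertical cylinder centered on the viewer.

    x, y   -- panel coordinates in meters; (0, 0) is straight ahead at eye level
    radius -- distance from the viewer's vertical axis to the curved surface
    Returns (x, y, z) in a frame where the viewer sits at the origin looking down +z.
    """
    # Preserve horizontal arc length: moving x meters across the panel
    # moves the point x meters along the curve, i.e. by x / radius radians.
    angle = x / radius
    return (radius * math.sin(angle), y, radius * math.cos(angle))


# Warp the four corners of a 1 m x 0.5 m panel onto a 0.6 m radius curve.
corners = [(-0.5, -0.25), (0.5, -0.25), (0.5, 0.25), (-0.5, 0.25)]
curved = [flat_to_cylinder(x, y, radius=0.6) for x, y in corners]
```

Because every warped point sits at the same horizontal distance from the user, the whole panel stays equally far from the eyes and within comfortable reach, which is the practical appeal of curving an interface.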

But what if you want a more fluid and flexible interface that can travel through the world with you? The Interaction Engine can handle that too – with wearable interfaces.

Wearable Interfaces

Ever wanted to be a cyborg? In some ways, everyone with a mobile phone already is part human, part machine. In the real world, our tools are increasingly becoming parts of ourselves. In VR, we can augment our digital selves with new capabilities.

A simple form of this interface can be seen in Blocks, which features a three-button menu that allows you to toggle between different shapes. It remains hidden unless your left palm is facing towards you.
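One way to implement a palm-triggered menu like this is to compare the palm's normal with the direction from the palm to the user's head, and show the menu only while the angle between them is small. The sketch below assumes the hand tracker supplies a palm position and normal for the left hand and that the head position is known; `PalmMenu`, `facing_cosine`, and the 40°/60° thresholds are hypothetical, not Ultraleap API.

```python
import math

Vec3 = tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _normalize(v: Vec3) -> Vec3:
    length = math.sqrt(_dot(v, v))
    return (v[0] / length, v[1] / length, v[2] / length)


def facing_cosine(palm_position: Vec3, palm_normal: Vec3, head_position: Vec3) -> float:
    """Cosine of the angle between the palm normal and the palm-to-head direction."""
    to_head = _normalize(_sub(head_position, palm_position))
    return _dot(_normalize(palm_normal), to_head)


class PalmMenu:
    """Hypothetical palm menu that is visible only while the palm faces the viewer."""

    def __init__(self, show_angle_deg: float = 40.0, hide_angle_deg: float = 60.0):
        # Separate show/hide thresholds (hysteresis) stop flicker at the boundary.
        self.show_cos = math.cos(math.radians(show_angle_deg))
        self.hide_cos = math.cos(math.radians(hide_angle_deg))
        self.visible = False

    def update(self, palm_position: Vec3, palm_normal: Vec3, head_position: Vec3) -> bool:
        c = facing_cosine(palm_position, palm_normal, head_position)
        # Once shown, the palm may drift further before the menu hides again.
        threshold = self.hide_cos if self.visible else self.show_cos
        self.visible = c >= threshold
        return self.visible
```

Comparing cosines rather than angles avoids an `acos` per frame; a larger angle corresponds to a smaller cosine, so "within 40°" becomes "cosine at least cos 40°".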

In addition to traditional user interfaces, the Interaction Engine includes robust support for wearable user interfaces. One example lets you pluck an interface from your hand and throw it, spawning a freestanding interface that could serve as an artist’s palette, an engineer’s toolkit, or a data scientist’s control panel.
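Throwing an interface involves two steps: estimating how fast the hand was moving at the moment of release, and then letting the spawned panel drift to a stop. Here is a minimal sketch, assuming timestamped hand positions from a tracker and simple exponential damping in place of a full physics engine (the Interaction Engine itself may handle this differently). `ThrowTracker`, `coast`, the 90 Hz frame time, and all default values are hypothetical.

```python
import math
from collections import deque

Vec3 = tuple[float, float, float]


class ThrowTracker:
    """Hypothetical helper: keeps recent hand samples to estimate release velocity."""

    def __init__(self, window: int = 5):
        # Each sample is (time in seconds, hand position in meters).
        self.samples: deque = deque(maxlen=window)

    def add(self, t: float, position: Vec3) -> None:
        self.samples.append((t, position))

    def release_velocity(self) -> Vec3:
        # Average velocity across the window, which smooths out tracking jitter.
        if len(self.samples) < 2:
            return (0.0, 0.0, 0.0)
        (t0, p0), (t1, p1) = self.samples[0], self.samples[-1]
        dt = t1 - t0
        if dt <= 0:
            return (0.0, 0.0, 0.0)
        return tuple((b - a) / dt for a, b in zip(p0, p1))


def coast(position: Vec3, velocity: Vec3, damping: float = 4.0,
          dt: float = 1 / 90, min_speed: float = 0.01) -> Vec3:
    """Slide a thrown panel with exponential damping; return where it comes to rest."""
    if damping <= 0:
        raise ValueError("damping must be positive or the panel never stops")
    decay = math.exp(-damping * dt)  # per-frame velocity multiplier
    pos, vel = list(position), list(velocity)
    while math.sqrt(sum(v * v for v in vel)) > min_speed:
        pos = [p + v * dt for p, v in zip(pos, vel)]
        vel = [v * decay for v in vel]
    return tuple(pos)
```

With this damping model a panel released at speed v travels roughly v / damping meters before settling, so the damping constant directly controls how far a given flick carries the interface.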

At Ultraleap, we play with human expectations to create compelling user experiences that point towards a future where “the digital” is no longer abstract, but something we see and experience in the real world. These wearable interfaces and UI tools are just the latest step – and now they’re in your hands.

All design is a form of storytelling. To take your users out of Flatland, you need the right narrative to drive their interactions and help them make sense of their new universe.