Handy applications for Leap Motion Controller 2

Posted: August 29, 2023

All hands on deck! Desktop software that’s ready to go with Ultraleap’s newest tracking camera

The Leap Motion Controller 2 is a small, sleek addition to your desktop with enormous possibilities.

Ultraleap hand tracking can make almost any interface more precise, expressive, and accessible. We have collated some applications which employ hand tracking for music, gaming, productivity, and more.

Some of these require a little more technical knowledge to get started than others. If you are already an avid V-Tuber/music producer/virtual pilot/whatever, you will likely be comfortable with this. However, if you are just getting started, bear in mind that there might be a bit of a learning curve.

Here are our recommendations:

Music

By translating hand position and movement into MIDI CC (Control Change messages), you can change synth parameters, virtual instrument expression, track volume, and anything else you can think of in a virtual instrument or DAW. Hand tracking is more intuitive than turning a knob or moving a slider, and it also outdoes physical controllers by allowing you to modify many parameters at once. Use various axes and hand movements to control several aspects of your music quickly and comfortably. Three programs that allow this are:

MIDIPaw

MidiPaw has a fun and friendly interface, and allows saving of presets and rules. It supports mapping an interesting variety of gestures and movements to MIDI CC.

Windows only

Difficulty: Medium

GECO Midi

Windows and Mac

Difficulty: Medium

NB: On Windows, both of the above may need a third-party program such as loopMIDI, because Windows lacks native support for virtual MIDI ports.

Mimu Glover

Mimu Glover also maps hand movements and shapes to virtual instrument expression, but its support for learned gestures and recognition of individual finger positions allows deep and subtle control. Hand tracking is one of several supported input sensors, which include the BBC micro:bit and even your mobile phone.

Glover separates itself from the pack with its graphical gesture-to-MIDI mapping. It also doesn’t require additional software to act as a MIDI device: it can be routed straight to a standalone soft synth or DAW.

Windows and Mac

Difficulty: Medium
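All three tools share the same underlying idea: take a tracked hand value, scale it into MIDI’s 0–127 range, and send it as a Control Change message. The sketch below shows only that scaling step and the standard 3-byte CC message layout (status byte 0xB0 plus the channel, then controller number, then value). It is not how MidiPaw, GECO or Glover work internally. The palm-height range of 100–400 mm and the choice of CC 1 (modulation wheel) are hypothetical values for illustration, and actually sending the bytes to a DAW would additionally need a MIDI library and, on Windows, a virtual port such as loopMIDI.

```python
def scale_to_cc(value: float, lo: float, hi: float) -> int:
    """Linearly map value in [lo, hi] to a 7-bit MIDI CC value (0-127).

    Input outside the range is clamped, so a hand moving past the
    assumed limits simply holds the parameter at its minimum or maximum.
    """
    if hi <= lo:
        raise ValueError("hi must be greater than lo")
    t = (value - lo) / (hi - lo)
    t = min(max(t, 0.0), 1.0)  # clamp to [0, 1]
    return round(t * 127)


def control_change(channel: int, controller: int, value: int) -> bytes:
    """Build a 3-byte MIDI Control Change message.

    channel is 0-15 (MIDI channels 1-16), controller and value are 0-127.
    """
    if not 0 <= channel <= 15:
        raise ValueError("channel must be 0-15")
    if not (0 <= controller <= 127 and 0 <= value <= 127):
        raise ValueError("controller and value must be 0-127")
    return bytes([0xB0 | channel, controller, value])


# Hypothetical example: palm height above the device, assumed to range
# from 100 mm to 400 mm, drives CC 1 (modulation wheel) on MIDI channel 1.
palm_height_mm = 250.0
msg = control_change(0, 1, scale_to_cc(palm_height_mm, 100.0, 400.0))
print(msg.hex())
```

Clamping rather than rejecting out-of-range positions is a deliberate choice here: tracked hands regularly drift past whatever limits you set, and a parameter that pins at 0 or 127 is easier to play than one that errors.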

Gaming

Controllers dominate in modern gaming, but for many they are a barrier to entry. Hands are the original controller! Hand tracking provides a more natural way to interact with the in-game environment, and also dials up the immersion in VR. The Leap Motion Controller 2 also eliminates the need for specialist hardware that is only used for one purpose, such as throttles for flight sims.

Microsoft Flight Simulator

YouTuber SimHanger Flight Simulation has created an extremely detailed and informative demo of flying a virtual plane with your real (tracked) hands. Technically, you can use your hands with any application that supports OpenXR hand tracking, but this video focuses on Microsoft Flight Simulator. Although the video refers to the original Leap Motion Controller, the instructions also work with the Leap Motion Controller 2.

Windows only

Difficulty: Medium

See video for setup details

driver_leap

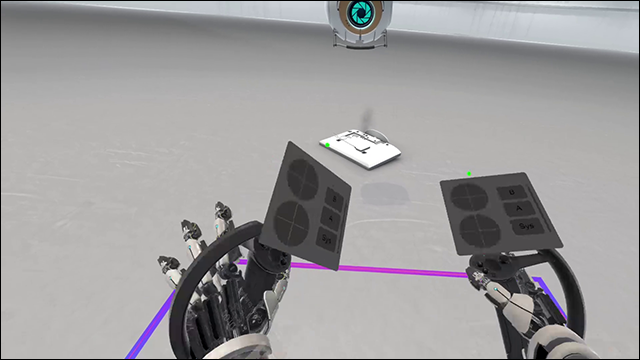

At the more technical end of things, driver_leap is an add-on for SteamVR that enables Ultraleap hand tracking. As a result, you can play games such as Half-Life: Alyx without controllers (as @sadlyitsbradley is doing here). Some confidence with editing config files helps, but you don’t need to build anything yourself, and the instructions are comprehensive.

Windows only

Difficulty: Hard

Follow linked instructions for setup details

VTubing

V-Tubing means using motion capture to map your physical movements onto a digital avatar and live-streaming the result: when you talk, they talk; when you smile, they smile.

The benefits of hand tracking here are obvious: when you move your hands, your avatar moves their hands, with all the extra dimensions of expression that allows. If you are already familiar with V-Tubing, I won’t be telling you anything new. But if you find the idea of digital cosplay appealing, or just want a cuddly cartoon animal to talk for you on Teams calls, the following apps let you get started with very little effort:

Animaze

Animaze uses your webcam to map your facial movements onto an avatar, then appears as a webcam device that you can select in your recording or streaming software.

A few avatars are available for free (although they are still incredibly detailed). Users familiar with 3D modelling can tweak them for better results. Ultraleap tracking is supported out of the box (instructions here).

Windows only

Difficulty: Easy

VSeeFace

VSeeFace is a similar offering. Setup is just as easy, and Ultraleap tracking works immediately. Its avatars lean more toward an anime style than the 3D anthropomorphic animals of Animaze, but it has some nice features, such as resetting your avatar’s position so you don’t have to face the webcam head-on.

Windows only

Difficulty: Easy
