Enterprise VR: Unlocking New Business Use Cases

Posted: December 1, 2020

Many enterprise VR users are unfamiliar with VR controllers. They're also time-poor. In this blog post, we look at how the option to interact using hand tracking reduces the learning curve and, by doing so, unlocks new enterprise VR use cases.

Imagine you’re in a brainstorming session in a virtual meeting space. You want to write on a post-it. Sounds simple? But before you can start writing, you need to press three controller buttons to spawn the post-it, then go into a menu system, then select pen mode.

Now imagine this situation under the time pressure of a one-hour meeting. Then add some people who’ve never used a VR collaboration tool before.

They may never have used VR controllers, or even hand-held game controllers. Not everyone is a gamer, and there's no guarantee that a customer or a time-poor C-suite executive will find button pushes intuitive.

It’s a simple example, but it illustrates just how low some enterprise users’ tolerance of a VR learning curve is likely to be.

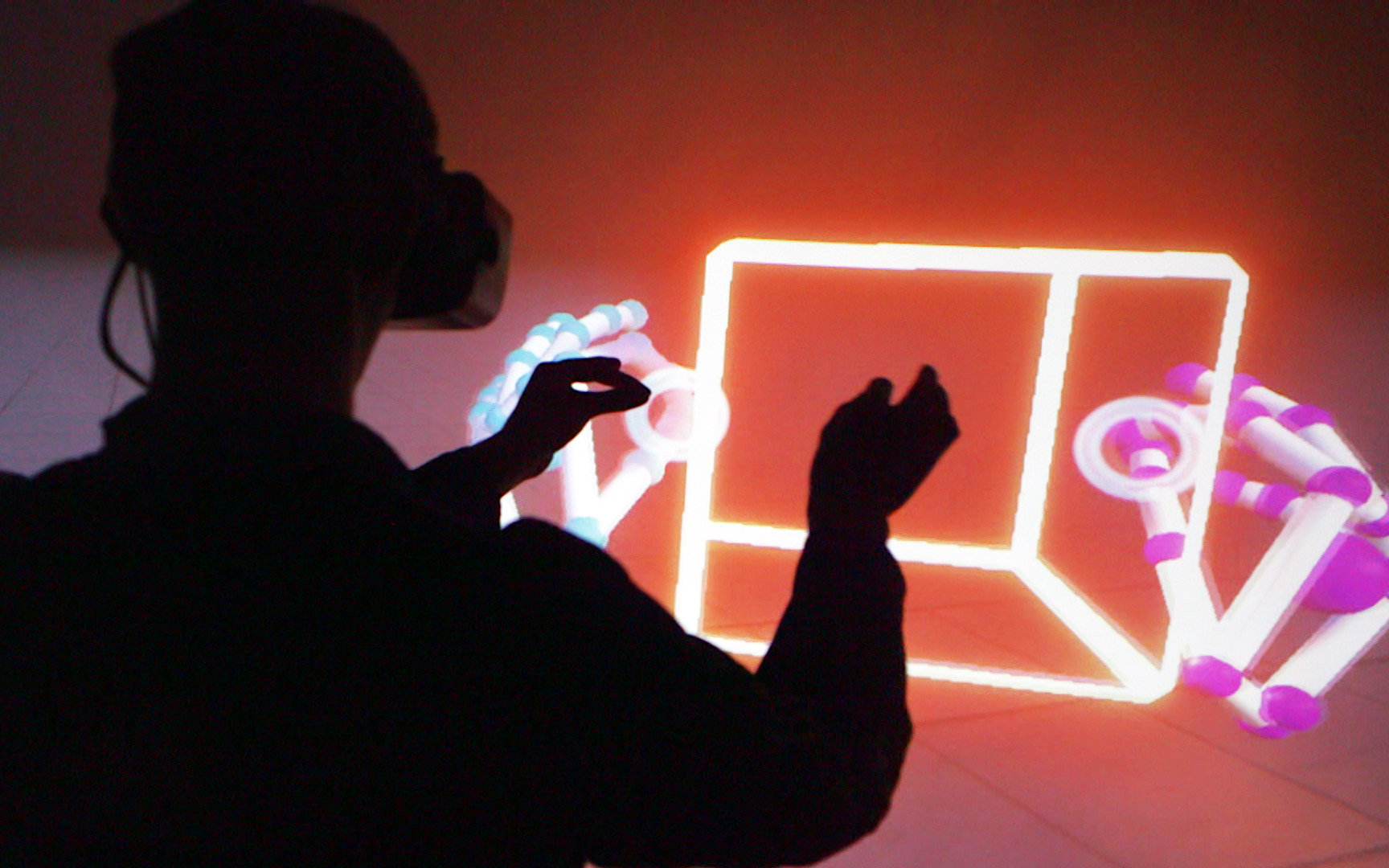

Now think how different the situation would be if your hands were accurately represented in real time and able to directly manipulate virtual objects. Instead of spawning a post-it by pressing a button, you would simply pick one up from a stack, pick up a pen, and start writing or sketching.

That sort of approachable, low-friction interaction is one of the key benefits hand tracking brings to virtual reality for enterprise.

Enterprise VR needs hybrid interaction

The evolution of input methods in 2D computing gives us some clues about how interaction will develop in enterprise VR.

Starting with simple taps, touch gestures have evolved to become ubiquitous in 2D computing. Today, swipes and pinch-zoom are instinctive for most people, including those, such as very young children, who struggle with other input methods like keyboard and mouse. Touch gestures reduce the learning curve and have created more efficient workflows.

Not every interaction is best done using gestures on a touchscreen. We operate in a hybrid world where we switch fluidly between touchscreen, keyboard/mouse, game controller, and more. We use the right tool for the right job.

It’s the same in enterprise virtual reality. VR controllers will always have a place (for example, in expert applications such as hands-on product design).

But VR controllers aren't optimal for all interactions. In use cases such as VR remote collaboration, menu launchers, training, medical rehabilitation, or design reviews, hand tracking adds significant value because of the way it lowers the barriers to entry.

How does hand tracking lower the barriers to entry in enterprise VR?

A recent study compared completing a task using hand tracking with completing it using two different VR controllers. It found that hand tracking allowed users to focus their mental effort on the task rather than on how to operate the controllers.

This is because hand tracking enables direct interaction (or manipulation) in VR.

Direct manipulation means that you can see and act directly on elements of an interface using physical movements. When you touch and drag an app from one place on your smartphone to another, that’s an example of direct manipulation. The concept is fundamental to 2D graphical user interfaces (GUIs).

The term "direct manipulation" was coined in the early 1980s to contrast with command-line interfaces, such as Microsoft BASIC, in which users have to remember and type in a set of commands to operate the computer.

Users find direct interaction more intuitive. It has given rise to design trends such as skeuomorphism, where digital objects resemble real-life ones (e.g. your computer's trash folder), and multi-touch gestures (e.g. pinch-zoom on touchscreens).

Integrating hand tracking into VR applications takes direct interaction into a new phase. It means that VR developers can draw on rich, three-dimensional real-world affordances when designing user experiences.
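To make direct manipulation in 3D a little more concrete, here is a minimal sketch of a pinch-to-grab interaction, like picking a post-it off a stack. It is not Ultraleap's API or its Interaction Engine: the `Hand` and `Grabbable` types, the fingertip positions in metres, and the 2 cm / 4 cm pinch thresholds are all hypothetical assumptions for illustration. The idea it shows is that an object is grabbed only where the user's fingers actually are, and then follows the hand one-to-one.

```python
import math
from dataclasses import dataclass

# A 3D point in metres (hypothetical coordinate convention).
Vec3 = tuple[float, float, float]

# Hypothetical thresholds: a pinch starts below 2 cm and ends above 4 cm.
# The gap between them (hysteresis) stops small tracking jitter from
# dropping an object the user is still holding.
PINCH_START = 0.02
PINCH_END = 0.04


@dataclass
class Hand:
    """Fingertip positions reported by a (hypothetical) hand tracker."""
    thumb_tip: Vec3
    index_tip: Vec3


@dataclass
class Grabbable:
    """A virtual object, e.g. a post-it, approximated as a sphere."""
    position: Vec3
    radius: float
    held: bool = False


def pinch_point(hand: Hand) -> Vec3:
    """Midpoint between the thumb and index fingertips."""
    return tuple((t + i) / 2 for t, i in zip(hand.thumb_tip, hand.index_tip))


def update(hand: Hand, obj: Grabbable, was_pinching: bool) -> bool:
    """Process one tracking frame and return whether the hand is pinching."""
    gap = math.dist(hand.thumb_tip, hand.index_tip)
    # Once a pinch has started, use the looser release threshold.
    pinching = gap < (PINCH_END if was_pinching else PINCH_START)
    point = pinch_point(hand)

    if pinching and not was_pinching:
        # A new pinch grabs the object only if it happens on the object.
        if math.dist(point, obj.position) <= obj.radius:
            obj.held = True
    elif not pinching:
        # Opening the fingers releases the object where it is.
        obj.held = False

    if obj.held:
        # Direct manipulation: the object follows the fingers one-to-one.
        obj.position = point
    return pinching
```

Calling `update` once per tracking frame, passing back the previous return value, is enough to pick up an object, carry it, and put it down again. The two-threshold design is one simple way to reduce the frustration of objects being dropped mid-gesture; production toolkits typically handle this with more sophisticated grasp detection.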

With direct interaction, to check whether a car's gear stick is in the correct location, you can simply reach out and see whether the CAD model is right. Some applications even include features for ergonomic assessments of products.

R3DT’s VR tool for designing assembly lines uses Ultraleap's hand tracking and direct interaction to allow a customer or executive with no prior experience of VR to interact, provide comments, or even reach out and move components. Its creators see this as one of the tool's key selling points.

The challenges of hand tracking in enterprise VR

The biggest challenge of hand tracking in enterprise VR is that the quality of the hand tracking is hugely important. Things that are merely annoying in VR gaming have a measurable business impact in enterprise VR.

Perceptible latency, hands that appear too slowly, or hands that disappear when they come too close together are extremely frustrating for users. Designing for hand tracking in VR is also different to designing for VR controllers. Simply mapping controller interactions onto hands is unlikely to result in a good user experience.

This makes not just hand tracking, but high-quality hand tracking essential to developing a successful enterprise VR application.

Ultraleap's enterprise-grade VR hand tracking solutions

Ultraleap’s hand tracking is the world’s leading hand tracking technology. It's based on 10 years and 5 generations of innovative software, over 150 patents, and a community of over 350,000 developers.

Our fifth generation of software, known as Gemini, comes integrated with Varjo’s latest human-eye resolution headsets for professional XR – the XR-3 and VR-3. It's also pre-integrated and optimised on the Qualcomm Snapdragon XR2 5G reference design.

Ultraleap's hand tracking is compatible with Unity, Unreal Engine and OpenXR. Detailed VR design guidelines and advanced tooling (such as our Interaction Engine) support the development of hand tracking solutions for enterprise VR.

Enterprise applications are a huge area of opportunity for VR. We're already seeing how quality hand tracking can be used to widen the enterprise user base – and we're looking forward to what comes next.
